What is OData? That should be your first question if you’re new to the term. Here is a brief description:

“The Open Data Protocol (OData) is a Web protocol for querying and updating data that provides a way to unlock your data and free it from silos that exist in applications today. OData does this by applying and building upon Web technologies such as HTTP, Atom Publishing Protocol (AtomPub) and JSON to provide access to information from a variety of applications, services, and stores. The protocol emerged from experiences implementing AtomPub clients and servers in a variety of products over the past several years. OData is being used to expose and access information from a variety of sources including, but not limited to, relational databases, file systems, content management systems and traditional Web sites.”

Want to know more about the what, why and how? Visit http://www.odata.org/introduction. Once you go through the OData specification, you’ll know where it fits into your requirements when designing a service architecture.

Let’s not dig deeper into those design decisions and get back to business: how do we create an OData service using WCF Data Services?

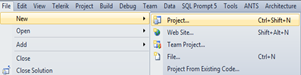

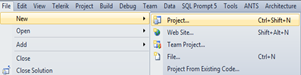

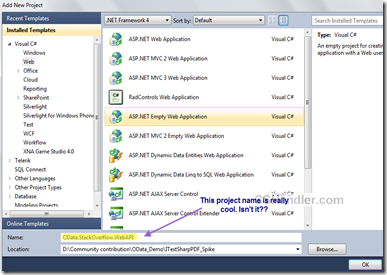

So go ahead and launch Visual Studio 2010 (or 2012 if you have it installed; at the time of writing it is available as a free RC).

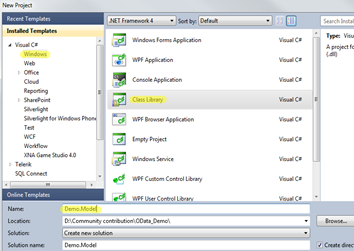

Now create a web project, or rather add a class library project (let’s keep a little layering and separation of concerns).

Below is a simple step-by-step walkthrough with screenshots, since a beginner who is new to Visual Studio may have trouble following written instructions alone.

Name it Demo.Model. Since we’re creating a sample service, this project will act as the model (data) for our service.

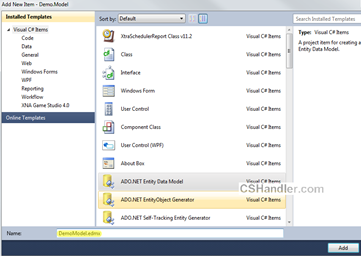

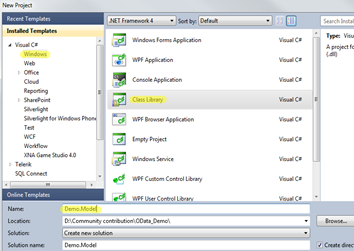

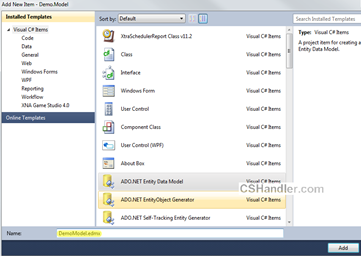

Now add a new item: Add > New Item > ADO.NET Entity Data Model.

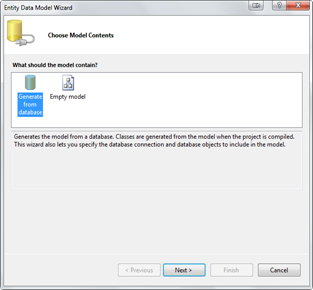

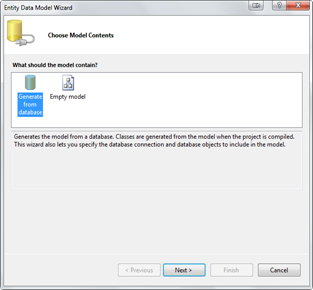

Name it DemoModel.edmx. When you click Add, the Entity Data Model Wizard asks for your input: you can either generate your entities from a database or create an empty model, which can later be used to generate your database. Make your choice.

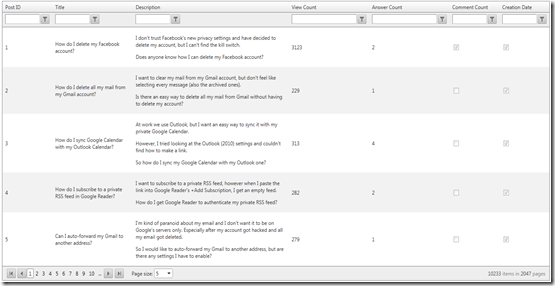

We’re simply going to use an existing database, since I already have a handy test database built from the Stack Overflow data dumps.

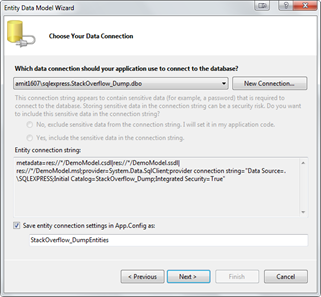

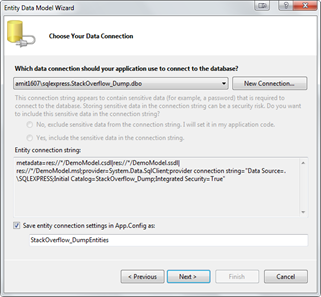

Click Next >, set up your connection string with New Connection..., and then click Next > again.

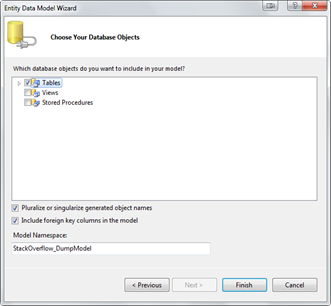

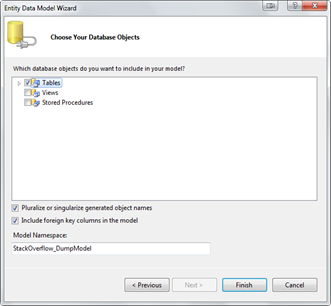

Select the database objects that you want to include in your entities.

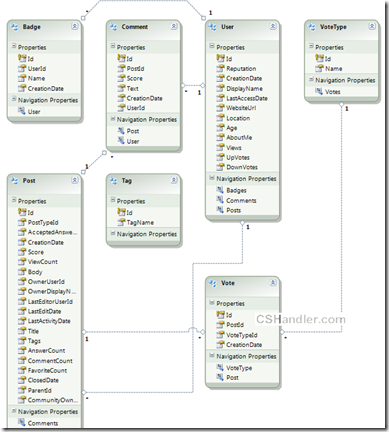

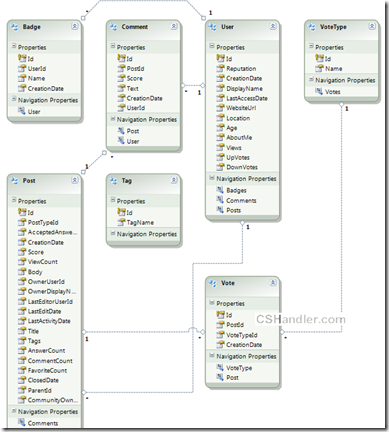

To create the OData service we only need the tables, so after clicking Finish your entity diagram should look like this. An important note: the relationships between the entities should be well defined, because OData locates related entities by following those relationships when it processes your query (read about URI queries).

We’re close to finishing the job; more than 50% of the work needed to create the service is done. Forget about the days when we had to define, create and use a client proxy and other configuration settings, including writing methods for every type of data required.

(Note: this post only shows how to read data from an OData service. I may write another time about creating, editing and deleting entities with POST, PUT and DELETE HTTP requests.)

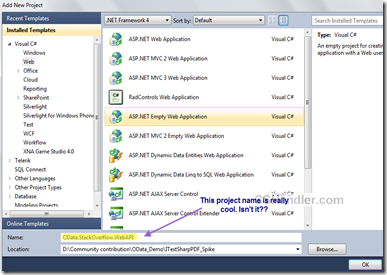

Now add a new web project from the templates (choose ASP.NET Empty Web Site).

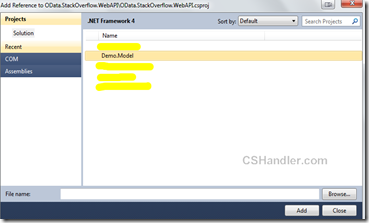

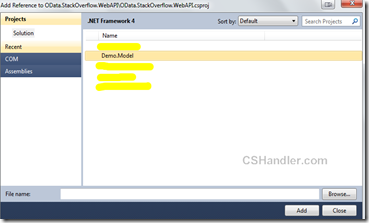

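Add a project reference to Demo.Model in the newly added website. Also copy the connection string from Demo.Model’s App.config into the website’s Web.config, because the entity context reads its connection string from the configuration of the application hosting it.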

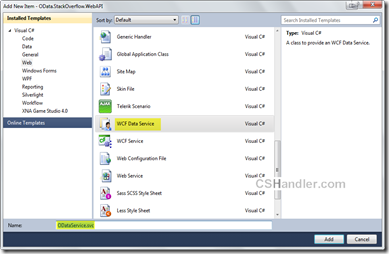

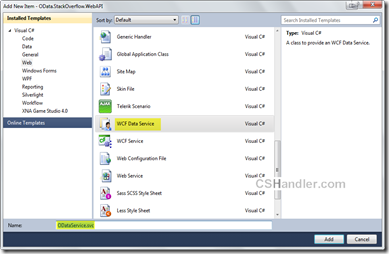

Now add a new item to the web project: a WCF Data Service.

Now open the code-behind file of your service and write the following code.

```csharp
using System.Data.Services;
using System.Data.Services.Common;
using System.Linq;
using System.ServiceModel.Web;
using System.Web;
using Demo.Model; // generated entities live in the Demo.Model project's default namespace
// JSONPSupportBehavior comes from the open source JSONP library described below;
// add a using for whatever namespace your copy of it declares.

[JSONPSupportBehavior]
//[System.ServiceModel.ServiceBehavior(IncludeExceptionDetailInFaults = true)] // for debugging
public class Service : DataService<StackOverflow_DumpEntities>
{
    // This method is called only once to initialize service-wide policies.
    public static void InitializeService(DataServiceConfiguration config)
    {
        // Read-only access to every entity set.
        config.SetEntitySetAccessRule("*", EntitySetRights.AllRead);
        // Service operations such as GetPopularPosts stay hidden until they get an access rule too.
        config.SetServiceOperationAccessRule("*", ServiceOperationRights.AllRead);
        // Set a reasonable page size.
        config.SetEntitySetPageSize("*", 25);
        //config.UseVerboseErrors = true; // for debugging
        config.DataServiceBehavior.MaxProtocolVersion = DataServiceProtocolVersion.V2;
    }

    /// <summary>
    /// Called when the service starts processing a request; used here to add some caching.
    /// </summary>
    /// <param name="args">The request arguments.</param>
    protected override void OnStartProcessingRequest(ProcessRequestArgs args)
    {
        base.OnStartProcessingRequest(args);
        // Cache for a minute, varying by query string and Accept* headers.
        HttpContext context = HttpContext.Current;
        HttpCachePolicy cache = context.Response.Cache;
        cache.SetCacheability(HttpCacheability.ServerAndPrivate);
        cache.SetExpires(context.Timestamp.AddSeconds(60));
        cache.VaryByHeaders["Accept"] = true;
        cache.VaryByHeaders["Accept-Charset"] = true;
        cache.VaryByHeaders["Accept-Encoding"] = true;
        cache.VaryByParams["*"] = true;
    }

    /// <summary>
    /// Sample custom service operation; OData exposes it alongside the entity sets.
    /// </summary>
    /// <returns>The 20 most viewed posts.</returns>
    [WebGet]
    public IQueryable<Post> GetPopularPosts()
    {
        var popularPosts =
            (from p in this.CurrentDataSource.Posts
             orderby p.ViewCount descending
             select p).Take(20);

        return popularPosts;
    }
}
```

Here we added some default configuration settings in the InitializeService() method. Take note of the protocol version: DataServiceProtocolVersion.V3 is the latest version of the OData protocol for .NET. You can get it by installing WCF Data Services 5.0 for OData V3 and replacing the references to System.Data.Services and System.Data.Services.Client with Microsoft.Data.Services and Microsoft.Data.Services.Client.

Otherwise it’s not a big deal: you can keep DataServiceProtocolVersion.V2, as in the code above, and it works just fine.
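The SetEntitySetPageSize("*", 25) call in the service code means no response carries more than 25 entries; when there are more, the feed ends with a link to the next page. Here is a minimal Python sketch of a client that follows those links. It assumes the V2 verbose JSON shape (`{"d": {"results": [...], "__next": "..."}}`) and a hypothetical `fetch` callable that performs the HTTP GET with `Accept: application/json` and returns the body text. `fetch` is injected so the sketch runs without a live service.

```python
import json
from typing import Callable, Iterator, Optional


def iterate_all_entries(first_url: str, fetch: Callable[[str], str]) -> Iterator[dict]:
    """Yield every entry of a paged feed by following its next-page links."""
    url: Optional[str] = first_url
    while url:
        d = json.loads(fetch(url))["d"]
        if isinstance(d, list):
            # V1-style response: a bare array with no paging information.
            yield from d
            return
        # V2 verbose JSON: entries sit under "results"; "__next" is only
        # present when the server cut the feed off at the page size.
        yield from d["results"]
        url = d.get("__next")


# Two fake pages standing in for real HTTP responses.
pages = {
    "page1": json.dumps({"d": {"results": [{"Id": 1}, {"Id": 2}], "__next": "page2"}}),
    "page2": json.dumps({"d": {"results": [{"Id": 3}]}}),
}
ids = [entry["Id"] for entry in iterate_all_entries("page1", pages.__getitem__)]
print(ids)  # [1, 2, 3]
```

With the real service, `fetch` would wrap `urllib.request.urlopen`, and the first URL would be something like the Posts entity set.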

Next, in the service code I’ve added some caching support by overriding OnStartProcessingRequest().
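To make those cache settings concrete, here is a toy Python model. It is not ASP.NET’s output cache. A response is reused for 60 seconds, but only for a request with the same path, the same query parameters (VaryByParams["*"]) and the same Accept, Accept-Charset and Accept-Encoding headers. HttpCacheability.ServerAndPrivate also lets the browser keep a copy while forbidding shared proxies from caching it; the model covers only the server side. `ResponseCache` and its injectable clock are illustrative names.

```python
import time
from typing import Callable, Dict, Mapping, Tuple

# Headers the service code lists in VaryByHeaders.
VARY_HEADERS = ("Accept", "Accept-Charset", "Accept-Encoding")

CacheKey = Tuple[str, Tuple[Tuple[str, str], ...], Tuple[str, ...]]


class ResponseCache:
    """Toy server-side cache keyed like VaryByParams["*"] plus VaryByHeaders."""

    def __init__(self, ttl_seconds: float = 60.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.ttl = ttl_seconds
        self.clock = clock
        self._entries: Dict[CacheKey, Tuple[float, str]] = {}

    def _key(self, path: str, params: Mapping[str, str],
             headers: Mapping[str, str]) -> CacheKey:
        # Every query parameter and every varied header becomes part of the key,
        # so ?$top=20 and ?$top=5 (or Atom vs JSON) are cached separately.
        return (path,
                tuple(sorted(params.items())),
                tuple(headers.get(name, "") for name in VARY_HEADERS))

    def get_or_compute(self, path: str, params: Mapping[str, str],
                       headers: Mapping[str, str], compute: Callable[[], str]) -> str:
        key = self._key(path, params, headers)
        now = self.clock()
        cached = self._entries.get(key)
        if cached is not None and cached[0] > now:  # still fresh
            return cached[1]
        body = compute()
        self._entries[key] = (now + self.ttl, body)  # expires ttl seconds from now
        return body


calls = []

def render_feed() -> str:
    calls.append(1)  # count how often the "expensive" query really runs
    return "<feed/>"

cache = ResponseCache(clock=lambda: 0.0)  # frozen clock keeps the demo deterministic
json_headers = {"Accept": "application/json"}
cache.get_or_compute("/Service.svc/Posts", {"$top": "20"}, json_headers, render_feed)
cache.get_or_compute("/Service.svc/Posts", {"$top": "20"}, json_headers, render_feed)  # hit
cache.get_or_compute("/Service.svc/Posts", {"$top": "5"}, json_headers, render_feed)   # miss
print(len(calls))  # 2
```

The design point is the key: because it includes every query parameter, each distinct OData URL gets its own cached copy.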

I haven’t forgotten the JSONPSupportBehavior attribute on the service class, either. It comes from an open source library that adds JSONP support to your data service.

First of all, you should know what JSONP adds over plain JSON. Keeping it short:

i) Cross-domain support

ii) The JSON is embedded inside a call to a callback function.

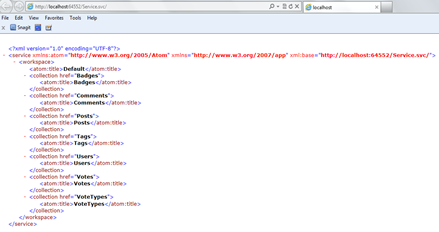

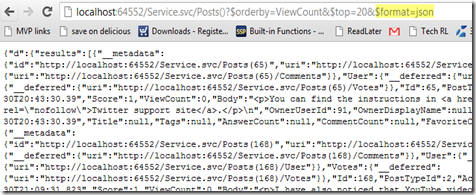

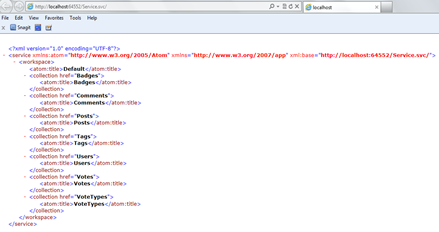

And that’s it, we’re done creating the service. Surprised? Don’t be. Now let’s run the project and see what we’ve got. If everything goes fine, you’ll see it running like this.

Now here’s the interesting part.

Play with this service and see the power of OData. As you’ve seen, we didn’t need any custom method implementation here: we’ll work directly with our entities through the URL and get the results back as Atom or JSON. Sounds cool?
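Plain HTTP is enough to talk to the service. An entity set lives at the service root plus its name, and the Accept header chooses the format. Below is a minimal Python sketch that uses only the standard library. It assumes the service is reachable at a hypothetical http://localhost:12345/Service.svc (use the address Visual Studio’s development server shows you) and builds the request without sending it. For this V2 service, `application/atom+xml` asks for the Atom feed and `application/json` asks for JSON.

```python
from urllib.request import Request

# Hypothetical address; replace with the URL your development server uses.
SERVICE_ROOT = "http://localhost:12345/Service.svc"

ACCEPT = {
    "atom": "application/atom+xml",
    "json": "application/json",
}


def entity_set_request(entity_set: str, fmt: str = "atom") -> Request:
    """Build (but do not send) a GET request for one entity set in the given format."""
    return Request(f"{SERVICE_ROOT}/{entity_set}", headers={"Accept": ACCEPT[fmt]})


req = entity_set_request("Posts", fmt="json")
print(req.full_url)              # http://localhost:12345/Service.svc/Posts
print(req.get_header("Accept"))  # application/json
# To call the running service: urllib.request.urlopen(req).read()
```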

If you have LINQPad installed, launch it; otherwise download and install it from linqpad.net.

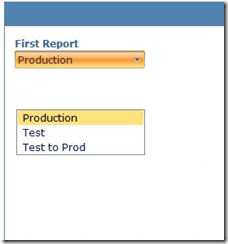

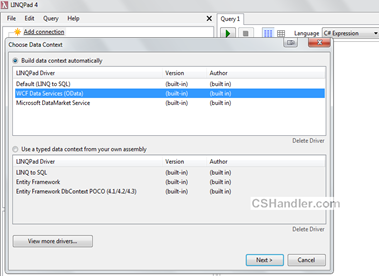

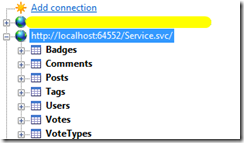

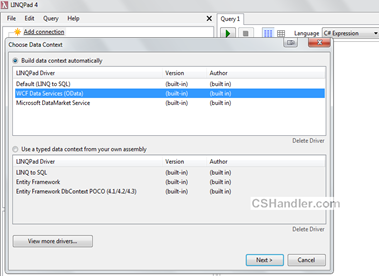

Did you know that LINQPad supports OData services? We’ll add a new connection and select WCF Data Services as our data source.

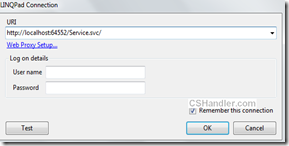

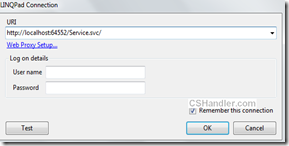

Now click Next and enter your service URL (make sure the service we just created is up and running).

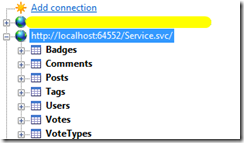

The moment you click OK, you’ll see all your entities in the connection panel.
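Every WCF Data Service also describes itself at `<service root>/$metadata`. This is an EDMX/CSDL document that lists the entity sets, the entity types and their relationships, which is the information a client tool needs to present your entities. The Python sketch below extracts the entity set names from such a document. `SAMPLE_METADATA` is a trimmed, hand-written illustration, not the actual output of this service; the `Users` set and the `StackOverflow_DumpModel` namespace are assumptions.

```python
import xml.etree.ElementTree as ET
from typing import List

SAMPLE_METADATA = """<edmx:Edmx Version="1.0" xmlns:edmx="http://schemas.microsoft.com/ado/2007/06/edmx">
  <edmx:DataServices>
    <Schema Namespace="StackOverflow_DumpModel" xmlns="http://schemas.microsoft.com/ado/2008/09/edm">
      <EntityContainer Name="StackOverflow_DumpEntities">
        <EntitySet Name="Posts" EntityType="StackOverflow_DumpModel.Post" />
        <EntitySet Name="Users" EntityType="StackOverflow_DumpModel.User" />
      </EntityContainer>
    </Schema>
  </edmx:DataServices>
</edmx:Edmx>"""


def entity_set_names(metadata_xml: str) -> List[str]:
    """Return the Name of every EntitySet element in a $metadata document."""
    root = ET.fromstring(metadata_xml)
    # Compare local tag names only, so any CSDL namespace version matches.
    return [el.get("Name") for el in root.iter()
            if el.tag.rsplit("}", 1)[-1] == "EntitySet"]


print(entity_set_names(SAMPLE_METADATA))  # ['Posts', 'Users']
```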

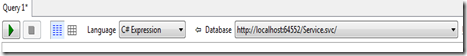

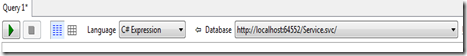

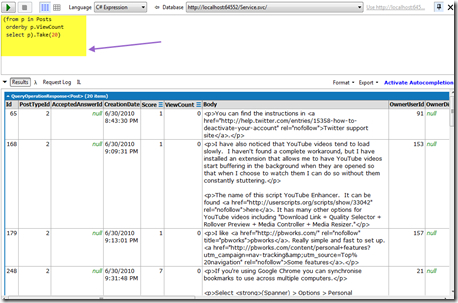

Select your service in the editor and start typing your LINQ queries.

Say I need the top 20 posts ordered by view count. Here is my LINQ query:

```csharp
(from p in Posts
 orderby p.ViewCount descending
 select p).Take(20)
```

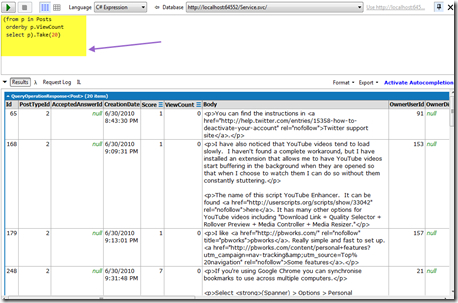

Now run this with LINQPAD and see the results.

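Similarly, you can write nested and more complex LINQ queries against your OData service, as long as the WCF Data Services client can translate them into a URL. Joins and grouping, for example, are not supported.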

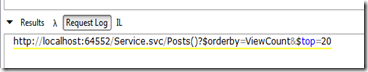

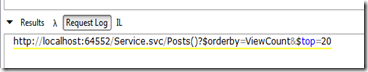

Now here is the key point, the root of the whole post:

The OData URI conventions are what let you filter and order the data in your entities. By the end of this article, you’ve created an API much like the Netflix, Twitter or any other public API.
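These conventions are ordinary query string options ($filter, $orderby, $top, $skip, $expand, $select), so you can also compose the URLs by hand. Below is a minimal Python sketch that assumes the hypothetical service root http://localhost:12345/Service.svc. The `Score` column in the second example is assumed to exist in the Stack Overflow dump’s Posts table. `$select` requires protocol V2, which the service code enables. The first URL asks for the 20 most viewed posts.

```python
from typing import Optional, Sequence
from urllib.parse import quote

# Characters left unescaped so the URL stays readable in a browser or LINQPad.
_SAFE = "',()/$"


def odata_url(root: str, entity_set: str, *,
              filter_expr: Optional[str] = None,
              orderby: Optional[str] = None,
              top: Optional[int] = None,
              skip: Optional[int] = None,
              expand: Sequence[str] = (),
              select: Sequence[str] = ()) -> str:
    """Compose an OData query URL from the standard system query options."""
    options = []
    if filter_expr:
        options.append(("$filter", filter_expr))
    if orderby:
        options.append(("$orderby", orderby))
    if top is not None:
        options.append(("$top", str(top)))
    if skip is not None:
        options.append(("$skip", str(skip)))
    if expand:
        options.append(("$expand", ",".join(expand)))
    if select:
        options.append(("$select", ",".join(select)))
    url = f"{root.rstrip('/')}/{entity_set}"
    if options:
        url += "?" + "&".join(f"{name}={quote(value, safe=_SAFE)}" for name, value in options)
    return url


root = "http://localhost:12345/Service.svc"  # hypothetical port
print(odata_url(root, "Posts", orderby="ViewCount desc", top=20))
# http://localhost:12345/Service.svc/Posts?$orderby=ViewCount%20desc&$top=20
print(odata_url(root, "Posts", filter_expr="ViewCount gt 1000 and Score gt 10",
                select=["Title", "ViewCount"]))
```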

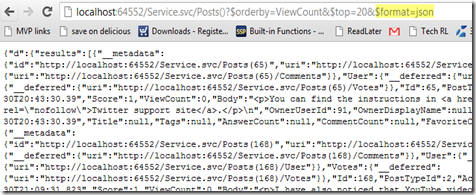

Moreover, since we used JSONPSupportBehavior, you can add one more parameter to the URL above, enter it in the browser and go.
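Below is a minimal Python sketch of that extra step. It assumes the library reads `$format=json` and `$callback=<function name>` from the query string, which is how the JSONPSupportBehavior sample is usually invoked; check the version you downloaded. The `jsonp_url` helper and the `showPosts` callback are hypothetical names. The base URL is the 20-most-viewed query against the hypothetical localhost address.

```python
from urllib.parse import urlencode


def jsonp_url(query_url: str, callback: str) -> str:
    """Append the JSON format and JSONP callback options to an OData query URL."""
    sep = "&" if "?" in query_url else "?"
    # safe="$" keeps the option names readable instead of encoding them as %24.
    return query_url + sep + urlencode({"$format": "json", "$callback": callback}, safe="$")


base = "http://localhost:12345/Service.svc/Posts?$orderby=ViewCount%20desc&$top=20"
print(jsonp_url(base, "showPosts"))
# http://localhost:12345/Service.svc/Posts?$orderby=ViewCount%20desc&$top=20&$format=json&$callback=showPosts
```

Opened in a browser, such a URL should return `showPosts({...});` rather than bare JSON.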

In the next post we’ll see how to consume this service from a plain HTML page using JavaScript. Keep an eye on the feed and stay in touch.